According to the USA’s National Oceanic and Atmospheric Administration (NOAA), Americans increasingly demand more accurate and detailed weather and climate forecasts. As a result, weather and climate models are becoming more complex and moving to higher resolutions.

There is a wealth of process-level expertise across the US weather and climate community, but for it to be truly effective it needs to be tapped and brought closer to the model development activities led by NOAA and the National Center for Atmospheric Research (NCAR).

The Model Diagnostics Task Force (MDTF), funded by NOAA’s Modeling, Analysis, Predictions and Projections (MAPP) program, created new diagnostics software to capture that community expertise and make it available as and when required for the development of new weather and climate models.

One of the project’s main goals is to compile community-developed tools for analyzing weather and climate data into a simple-to-run, flexible framework, so that users can focus more on science and less on (re)writing code for analyses that are routinely performed across the weather and climate science community.

Experts in the community submit proposals to write a process-oriented diagnostic (POD). If their proposal is selected, they work with the framework development team to develop the POD and ensure it runs on model output from NOAA’s Geophysical Fluid Dynamics Laboratory (GFDL) and NCAR.

What does the software do?

The new software provides detailed analyses, maps and figures of how well models are performing and whether or not the underlying model dynamics and physics are behaving as they should. John Krasting, a physical scientist in GFDL’s Ocean and Cryosphere Division, is overseeing its development. “We feed model output into the diagnostic framework driver; the software package determines what analyses to run and presents the results as a web page for a researcher or model developer to review,” he explains.

“We have diagnostics that look at different parts of the Earth system, including the atmosphere, ocean, land and sea ice,” Krasting continues. “The diagnostics look at very fast processes occurring on hourly timescales all the way up to decadal-to-centennial-scale processes.”
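Krasting describes a driver that decides which analyses it can run on a given set of model output and then collects the results on a web page. The short Python sketch below shows that idea. It is not the MDTF framework’s code. The POD names, the CMIP-style variable names (`pr`, `thetao`, `so`, `siconc`) and both functions are hypothetical. The sketch also assumes that each diagnostic simply declares the variables it needs.

```python
"""Hypothetical sketch of a diagnostics driver: pick runnable PODs and index the results."""
from html import escape

# Hypothetical POD registry: each POD declares the model variables it needs
# (CMIP-style names, assumed for illustration).
POD_REQUIREMENTS = {
    "diurnal_precip": {"pr"},
    "mixed_layer_depth": {"thetao", "so"},
    "sea_ice_concentration": {"siconc"},
}


def select_pods(available_vars, requirements=POD_REQUIREMENTS):
    """Split PODs into those runnable on the given variables and those skipped."""
    available = set(available_vars)
    runnable, skipped = [], {}
    for name, needed in sorted(requirements.items()):
        missing = needed - available
        if missing:
            skipped[name] = sorted(missing)  # record why the POD cannot run
        else:
            runnable.append(name)
    return runnable, skipped


def render_index(runnable, skipped):
    """Build a minimal HTML summary page linking to each POD's (assumed) results page."""
    lines = ["<html><body>", "<h1>Diagnostics summary</h1>", "<ul>"]
    for name in runnable:
        lines.append(f'<li><a href="{escape(name)}/index.html">{escape(name)}</a></li>')
    for name, missing in skipped.items():
        lines.append(f"<li>{escape(name)}: skipped, missing {escape(', '.join(missing))}</li>")
    lines += ["</ul>", "</body></html>"]
    return "\n".join(lines)


if __name__ == "__main__":
    runnable, skipped = select_pods(["pr", "tas", "siconc"])
    print(runnable)  # ['diurnal_precip', 'sea_ice_concentration']
    print(skipped)   # {'mixed_layer_depth': ['so', 'thetao']}
```

In the example run, the mixed-layer diagnostic is skipped because the ocean temperature and salinity fields are absent. When the driver skips a diagnostic and records the reason instead of failing, a single run can still report on every diagnostic it knows about.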

The software runs PODs written in Python and NCAR Command Language (NCL) on model output. Each POD script targets a specific physical process or emergent behavior, with the goals of determining how accurately the model represents that process, ensuring that models produce the right answers for the right reasons, and identifying gaps in the understanding of phenomena.

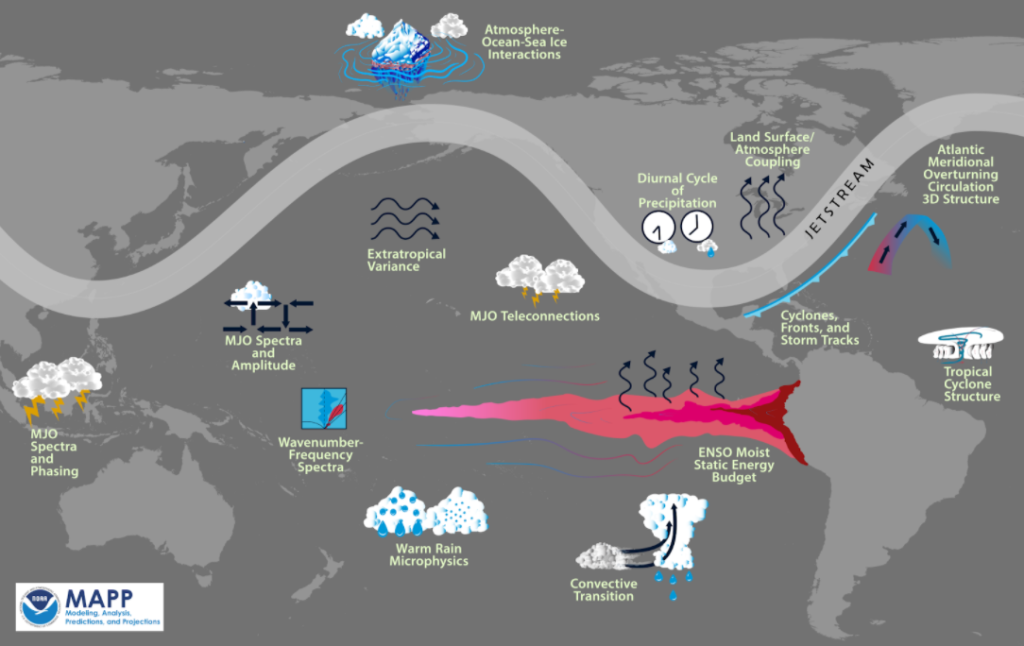

PODs span the climate realm (atmosphere, land surface, ocean, ice) in both time (diurnal-to-interannual) and space (point-to-planetary). They target a variety of physical processes, including cyclone lifetimes, warm rain microphysics, the 3D structure of the Atlantic Meridional Overturning Circulation, radiative feedbacks of climate forcings, radiative changes under Arctic sea-ice loss, mixed layer depths and sea-ice concentrations. Also covered are tropical cyclone rain rate characteristics, sea level rise, Madden-Julian Oscillation (MJO) propagation, MJO teleconnections, tropical wave amplitude and variability, soil moisture-evapotranspiration and its diurnal cycle, and precipitation-moisture relationships.
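The Python sketch below gives a rough idea of what a single POD computes. It averages an hourly precipitation series by hour of day, finds the hour of peak rainfall and measures the root-mean-square difference from an observed average. This is a toy example, not one of the MDTF PODs. The function names are hypothetical. The data are assumed to be evenly spaced hourly values already loaded into lists, whereas a real POD would read netCDF files. Synthetic cosine series stand in for the model and observational data.

```python
"""Hypothetical sketch of a process-oriented diagnostic: the diurnal cycle of precipitation."""
import math


def diurnal_composite(hourly_values, start_hour=0):
    """Average an evenly spaced hourly series by hour of day (0-23); NaN where no samples."""
    sums = [0.0] * 24
    counts = [0] * 24
    for i, value in enumerate(hourly_values):
        hour = (start_hour + i) % 24
        sums[hour] += value
        counts[hour] += 1
    return [s / c if c else math.nan for s, c in zip(sums, counts)]


def peak_hour(composite):
    """Hour of day at which the composite is largest (first one on ties), ignoring NaNs."""
    valid = [h for h, v in enumerate(composite) if not math.isnan(v)]
    return max(valid, key=lambda h: composite[h])


def rmse(model, obs):
    """Root-mean-square difference between two composites over hours valid in both."""
    pairs = [(m, o) for m, o in zip(model, obs) if not (math.isnan(m) or math.isnan(o))]
    return math.sqrt(sum((m - o) ** 2 for m, o in pairs) / len(pairs))


def synthetic_series(peak, days=10):
    """Toy rain-rate series: a clipped cosine peaking at hour `peak` every day."""
    return [max(0.0, math.cos(2 * math.pi * ((h % 24) - peak) / 24)) for h in range(24 * days)]


if __name__ == "__main__":
    model = diurnal_composite(synthetic_series(peak=12))
    obs = diurnal_composite(synthetic_series(peak=16))
    print(peak_hour(model), peak_hour(obs))  # 12 16
    print(round(rmse(model, obs), 3))
```

In this synthetic case the model composite peaks at hour 12 and the observed one at hour 16. A precipitation diagnostic could expose this kind of timing error.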

An essential aspect of the software is the distributed and communal way in which it has been developed. It is housed in a government laboratory (GFDL), which is working with another federally funded laboratory (NCAR, funded by the National Science Foundation), and the diagnostic components of the software are developed by the broad community, both federal and non-federal.

The competitive process through which contributors are selected ensures that the best minds are working on the most useful contributions to the software. Furthermore, the way in which development is structured solves significant incentive issues. For example, academic success depends on carrying out research and getting it published, whereas the software and model development process calls for coding, product development and testing.

The broad academic community can contribute hugely to model development, but academic incentive systems give little credit for writing code. This effort bridges those incentive structures and requirements: the laboratory benefits from valuable code, while the non-federal partners get to work on a range of interesting science questions.

Close collaboration

Software development was very much a team project. Members of the scientific community affiliated with government labs, academic institutions and the private sector have helped develop the framework, contributed PODs, or both.

The MDTF, led by David Neelin, professor of atmospheric and oceanic sciences at UCLA, coordinates the individual diagnostic developers at institutions across the USA with the framework development team.

The primary framework software development takes place at NOAA-GFDL and is led by Krasting, Princeton University’s Aparna Radhakrishnan, Thomas Jackson and Jessica Liptak from Science Applications International Corporation, and Wenhao Dong of the University Corporation for Atmospheric Research (UCAR). The framework development team is also supported by NCAR’s Dani Coleman, and Yi-Hung Kuo and Fiaz Ahmed of UCLA.

Other members of the MDTF leads team include Andrew Gettelman (NCAR), Eric Maloney of Colorado State University, Yi Ming (NOAA-GFDL) and Florida State University’s Allison Wing. The framework team and project leads work together to resolve issues with the code, brainstorm ideas for new features and draft plans for future releases of the MDTF diagnostics package.

The entire task force, including POD developers, meets monthly to answer questions, present analyses from PODs and share information about upcoming changes to the code.

The benefits

Uncovering what is working well in models and what can be improved is an important part of making better weather predictions and climate projections. By building stronger connections between the major modeling centers at government labs and the academic and private-sector communities, the MDTF aims to involve more people in the model development process. The task force hopes that this will ultimately lead to better models and a better understanding of how to use their output.

The community development approach has enabled the MDTF to cooperatively target processes spanning the entire climate realm, including the atmosphere, land surface, ocean and sea ice.

Availability of the software

The MDTF diagnostics package is available to download from GitHub, a platform for collaborative software development. The package can be run on a Linux or macOS desktop or laptop; it does not require access to high-performance computing (HPC) systems or privately hosted data.

Users can run the existing PODs on model output data in NetCDF (network common data form) format, or on sample model output and observational data from public servers. New PODs are added as they are delivered by task force members. The developers say the MDTF diagnostics package is geared toward weather and climate scientists from all sectors.

As with most open-source software, development is never finished. “The MDTF diagnostics package continues to grow and evolve as we incorporate more diagnostics and probe different parts of the weather and climate models,” says Krasting.

Much of the end-user and diagnostic-developer base is new to the Git version-control system. The framework team adapts the documentation to meet this need, and the task force facilitates two-way communication between the leads team and POD developers.

In addition to downloading the code from GitHub, installation of the software requires users to download supporting observational data and (optionally) sample model data, currently available via anonymous FTP (file transfer protocol) from UCAR. It also involves using an included script to install required version-controlled third-party libraries and dependencies via the Conda package manager, configuring paths to this data in a settings file and (optionally) conducting a test run of the framework on the sample data to verify the installation. The diagnostics and evaluations team also maintains an up-to-date, site-wide installation of the package for GFDL users, accessible from the post-processing/analysis cluster and workstations.
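Before the optional test run, it can help to confirm that the paths in the settings file point to real directories. The Python sketch below is one way to do that check. The key names (`OBS_DATA_ROOT`, `MODEL_DATA_ROOT`, `CONDA_ENV_ROOT`) and the `check_settings` function are hypothetical. The sketch also assumes a plain JSON settings file. The package’s actual settings file and field names may differ, so its own documentation is the authority on the real format.

```python
"""Hypothetical pre-run check of a settings file; key names are illustrative assumptions."""
import json
from pathlib import Path

REQUIRED_KEYS = ("OBS_DATA_ROOT", "CONDA_ENV_ROOT")  # hypothetical: observational data, Conda envs
OPTIONAL_KEYS = ("MODEL_DATA_ROOT",)                  # hypothetical: sample model data is optional


def check_settings(settings_path):
    """Return a list of problems found in a JSON settings file; empty means ready to run."""
    settings = json.loads(Path(settings_path).read_text())
    problems = []
    for key in REQUIRED_KEYS:
        value = settings.get(key)
        if not value:
            problems.append(f"{key} is not set")
        elif not Path(value).expanduser().is_dir():
            problems.append(f"{key} points to a missing directory: {value}")
    for key in OPTIONAL_KEYS:
        value = settings.get(key)
        # Optional paths are only checked when the user has filled them in.
        if value and not Path(value).expanduser().is_dir():
            problems.append(f"{key} points to a missing directory: {value}")
    return problems
```

The function returns every problem instead of stopping at the first one, so a user can fix all misconfigured paths in one pass.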

According to NOAA, the software package has already led to significant advances in model performance, including forecasts of regional precipitation and extreme events such as monsoons. “Many long-standing model biases have been cut roughly by half,” says MDTF lead David Neelin. “These improvements are critical for advancing a number of NOAA priorities,” he concludes.

What next for the Task Force?

In the current phase of the project, the team is anticipating PODs that explore the lifetimes of extra-tropical cyclones, rain rates in tropical cyclones, warm rain microphysics, distributions of surface-air temperature extremes and precipitation, thermodynamic-precipitation relationships, and radiative feedbacks of climate forcings. The MDTF diagnostics package also includes ocean PODs that target comparatively slower processes such as tropical Pacific sea-level trends and variability, and the impacts of sea-ice loss on radiative budgets.

The package is evolving. It has eight different diagnostics implemented, 11 under development to be included by 2022 and 28 proposed, which may be implemented by 2024. Proposed areas of focus include precipitation-buoyancy diagnostics, surface-flux diagnostics and the moist static energy variance budget of tropical cyclones. The current set of diagnostics is weighted toward the atmosphere, and the task force hopes to expand it to incorporate more components of the Earth system. This will bring new challenges, as the package will have to handle different model grids and data structures.

“We are working toward integrating the MDTF diagnostics package into the modeling center workflows at GFDL and NCAR to enable the package to run on more model experiments and configurations. We are also looking at ways of analyzing multiple simulations at the same time,” explains GFDL’s John Krasting.

On the software engineering side, the task force is exploring the use of container management software to easily and securely deploy the software on workstations and high-performance computing cluster- and cloud-based systems. Developers are also implementing continuous integration testing using GitHub Actions so that every pull request triggers end-to-end testing before the framework team reviews the code.

When asked what other meteorology-related projects its members are working on, the task force response is: “The sky is the limit.”